An MCP for CaptainDNS?

By CaptainDNS

Published on November 21, 2025

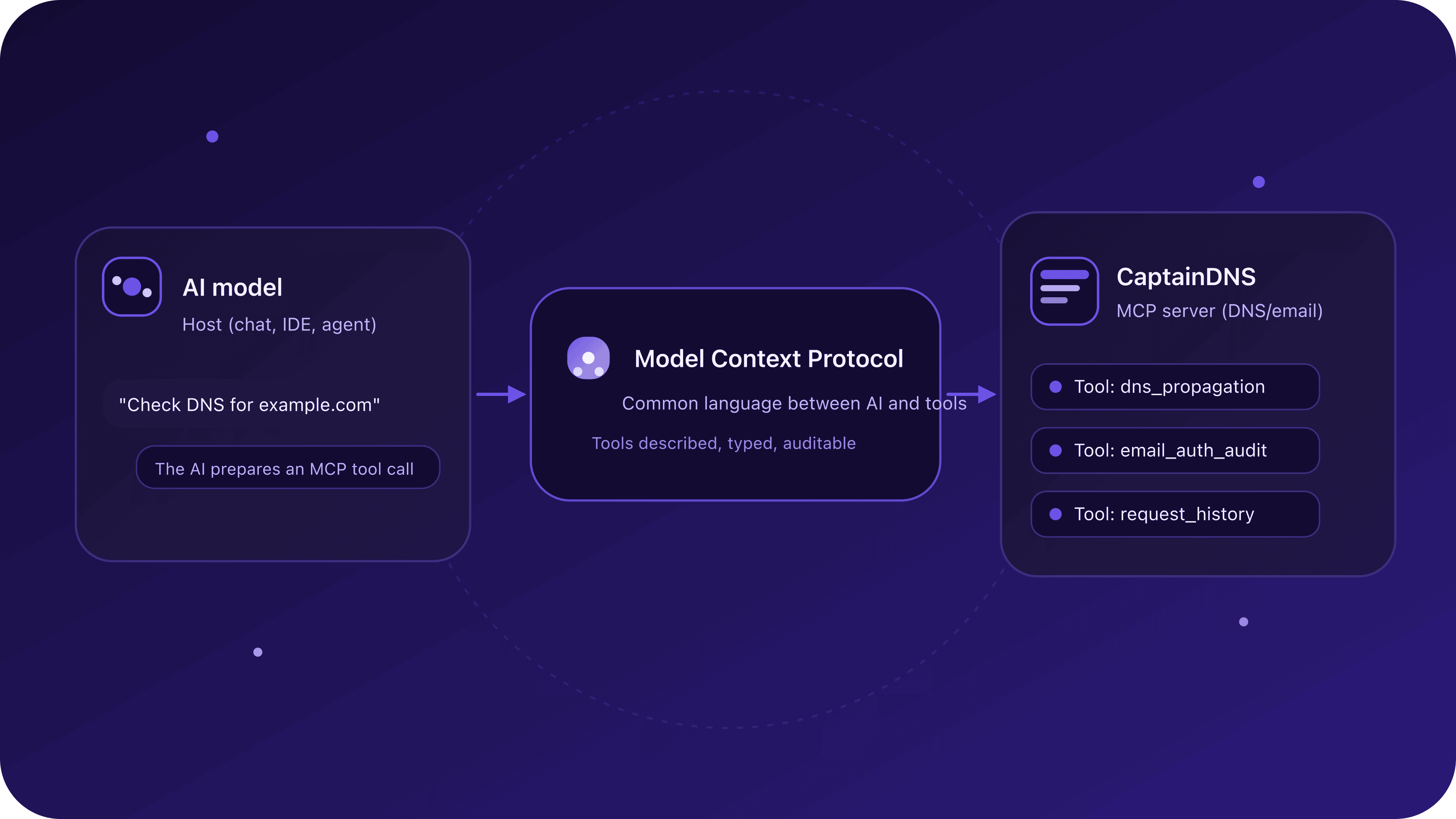

The Model Context Protocol (MCP) is an open protocol that lets an AI talk to external tools (APIs, databases, SaaS services) in a standardized way.

- It defines a common language between models and tools (the "MCP servers").

- It keeps you from rebuilding a different integration for every AI provider.

- It opens the door to real agents that can call CaptainDNS on their own.

This article lays the groundwork: what an MCP is, what it is used for, and why CaptainDNS decided to build one. The next articles will dive into architecture, technical choices and first concrete use cases, with an early-access track for users who want to try it.

Why bring up MCP before writing a line of code?

CaptainDNS is already a very specialized tool: DNS, email, propagation, monitoring.

An MCP, on its own, does "nothing": it is a dialogue layer between an AI and tools.

Before wiring one to the other, it is helpful to ask a few simple questions:

- What exactly is an MCP?

- Is it a universal standard or just the latest hype?

- Does everyone need it, or only some profiles (DevOps, SRE, deliverability teams, etc.)?

- And above all: what could it really change for a tool like CaptainDNS?

This article is the base for the rest: if you get what happens here, the following pieces (architecture, technical choices, demos) will make a lot more sense.

What is the Model Context Protocol (MCP)?

A protocol so AI can talk to your tools

The MCP (Model Context Protocol) is a protocol that defines how:

- a host (an application embedding an AI model, such as a ChatGPT client, an IDE or an internal agent),

- connects to one or more MCP servers ("tools" exposed via MCP: an API, a database, a service like CaptainDNS),

- and exchanges structured requests (in practice JSON) to execute actions.

You can see it as:

a standard plug to connect an AI to tools, instead of a set of proprietary cables for each provider.

The gist:

- the AI talks in natural language with the user,

- but as soon as it needs to act (query DNS, read a database, call an API), it goes through tools exposed via MCP.
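
To make "goes through tools" concrete, here is a minimal sketch of the message a host might send when the model decides to act. The `tools/call` method and the JSON-RPC 2.0 envelope come from the MCP specification; the tool name and argument names are the ones used in this article and may differ in the final CaptainDNS server.

```python
import json
from itertools import count

# JSON-RPC ids only need to be unique within one client session.
_next_id = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build the JSON-RPC 2.0 request that asks an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_next_id),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Argument names are assumptions; the real tool schema is not published yet.
request = build_tool_call(
    "dns_lookup", {"domain": "captaindns.com", "record_type": "MX"}
)
print(json.dumps(request, indent=2))
```

The user never sees this message: the host builds it from the model's decision and sends it to the MCP server.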

Three roles to remember

For the rest of the series, keep this trio in mind:

- The host: the application that hosts the model (ChatGPT, an IDE, an internal agent, etc.).

- The MCP client: the layer in that host that knows how to speak MCP.

- The MCP server: the service exposing tools the AI can use.

In our case, the MCP server will be CaptainDNS, seen from the AI as:

- a DNS/email tool, reachable through a standard protocol,

- with tools like dns_lookup, dns_propagation, email_auth_audit, etc.
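
As a rough idea of how those tools could be advertised to a host, the sketch below lists three descriptors in the shape MCP uses for a tools/list result (a name, a description and a JSON Schema under `inputSchema`). Only the tool names come from this article; the descriptions and parameter schemas are assumptions made for illustration.

```python
# Tool names come from the article; descriptions and schemas are assumptions.
RECORD_QUERY_SCHEMA = {
    "type": "object",
    "properties": {
        "domain": {"type": "string"},
        "record_type": {"type": "string"},
    },
    "required": ["domain", "record_type"],
}

CAPTAINDNS_TOOLS = [
    {
        "name": "dns_lookup",
        "description": "Resolve one record type for a domain.",
        "inputSchema": RECORD_QUERY_SCHEMA,
    },
    {
        "name": "dns_propagation",
        "description": "Compare answers for a record across several resolvers.",
        "inputSchema": RECORD_QUERY_SCHEMA,
    },
    {
        "name": "email_auth_audit",
        "description": "Check SPF, DKIM and DMARC for a domain.",
        "inputSchema": {
            "type": "object",
            "properties": {"domain": {"type": "string"}},
            "required": ["domain"],
        },
    },
]


def tools_list_result(tools: list) -> dict:
    """Wrap descriptors in the result object returned for a tools/list request."""
    return {"tools": tools}


for tool in tools_list_result(CAPTAINDNS_TOOLS)["tools"]:
    print(tool["name"], "requires", tool["inputSchema"]["required"])
```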

Examples of what an MCP enables

The MCP is not limited to CaptainDNS. You can imagine MCP servers for almost anything:

- An MCP for a database: the AI can list tables, run prepared SQL queries, analyze reports, without directly exposing database credentials.

- An MCP for a DevOps tool: the AI can trigger deployments, read logs, check service status, browse dashboards, etc.

- An MCP for a CRM or a business tool: the AI can find a customer, list contracts, check ticket history, offer a summary for support.

- An MCP for a filesystem or object storage: the AI can explore a file space, read some documents, generate summaries, without leaving the controlled environment.

What changes compared to "classic" integrations is that:

- the model is no longer locked in a simple chat window,

- it becomes a sort of coordinator of tools, able to chain several actions:

- call a "database" MCP,

- then a "monitoring" MCP,

- then a "ticketing" MCP,

- and finally produce a consistent report.
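
The chaining idea can be sketched in a few lines. In the example below, three stub functions stand in for calls to three separate MCP servers, and each step can read what the previous ones found. Everything here is invented for illustration, and the order is fixed by hand, whereas a real agent would let the model choose which tool to call next.

```python
from typing import Callable


# Stand-ins for three separate MCP servers. All names and data are invented.
def query_database(context: dict) -> dict:
    return {"customer_plan": "pro"}


def check_monitoring(context: dict) -> dict:
    return {"open_alerts": 2}


def open_ticket(context: dict) -> dict:
    # A later step can use what earlier steps found.
    priority = "high" if context["open_alerts"] > 0 else "low"
    return {"ticket_priority": priority}


def run_chain(steps: list[Callable[[dict], dict]]) -> dict:
    """Run each step in order, feeding it everything gathered so far."""
    context: dict = {}
    for step in steps:
        context.update(step(context))
    return context


report = run_chain([query_database, check_monitoring, open_ticket])
print(report)
```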

What is MCP really useful for? (and where it shines)

1. Standardize AI ↔ tools integrations

Without MCP, every integration looks like:

- a different JSON shape,

- a slightly different protocol,

- a specific way to declare tools for each provider (OpenAI, other LLM vendors, etc.).

With MCP:

- you write a single MCP server,

- compatible with several hosts (as long as they speak MCP),

- which avoids rebuilding the same integration three times.
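
To illustrate why one server is enough, here is a deliberately small dispatcher that answers the two tool-related methods any MCP-speaking host would send. It is a sketch, not the CaptainDNS implementation: it leaves out the initialization handshake and the transport, trims the tools/list answer to names only, and uses a stubbed `dns_lookup` function that is purely hypothetical.

```python
def make_error(request_id, code: int, message: str) -> dict:
    """Build a JSON-RPC 2.0 error response."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "error": {"code": code, "message": message},
    }


def handle_request(request: dict, tools: dict) -> dict:
    """Answer one JSON-RPC request. `tools` maps a tool name to a callable."""
    request_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        # Trimmed for brevity: real descriptors also carry a description and schema.
        result = {"tools": [{"name": name} for name in sorted(tools)]}
    elif method == "tools/call":
        params = request.get("params", {})
        handler = tools.get(params.get("name"))
        if handler is None:
            return make_error(request_id, -32602, f"Unknown tool: {params.get('name')}")
        text = handler(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        return make_error(request_id, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


# Hypothetical tool body; a real one would call the existing CaptainDNS API.
def dns_lookup(domain: str, record_type: str) -> str:
    return f"{record_type} lookup for {domain} (stubbed)"


TOOLS = {"dns_lookup": dns_lookup}
call = {
    "jsonrpc": "2.0",
    "id": 7,
    "method": "tools/call",
    "params": {
        "name": "dns_lookup",
        "arguments": {"domain": "captaindns.com", "record_type": "MX"},
    },
}
print(handle_request(call, TOOLS))
```

Nothing in `handle_request` depends on which host is calling, which is the point of the standard.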

For a SaaS like CaptainDNS, that is key:

one well-designed MCP connector can, in theory, talk to multiple AI ecosystems, current and future.

2. Let agents work "on their own" (with guardrails)

Another benefit of MCP is that it is agent-friendly.

An AI agent can:

- analyze the user's request,

- decide which MCP tools to call,

- plan several calls, run them, analyze results,

- and only come back to the user with a diagnosis or an action plan.

The protocol provides a framework:

- tools are precisely described (name, parameters, types),

- the host can ask for user confirmation before certain calls (for sensitive operations),

- everything can be logged and controlled.
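
On the host side, that framework could look like the wrapper below: every call is logged, and calls to tools marked as sensitive need an explicit confirmation first. This is a sketch under assumptions: `create_probe` is a hypothetical tool name, and `run_tool` and `confirm` are callbacks the host would supply.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("mcp.host")

# Hypothetical tool name: the article only says some calls are sensitive.
SENSITIVE_TOOLS = {"create_probe"}


def guarded_call(tool_name, arguments, run_tool, confirm):
    """Log every call and ask for confirmation before sensitive ones."""
    if tool_name in SENSITIVE_TOOLS and not confirm(tool_name, arguments):
        log.info("refused by user: %s %s", tool_name, arguments)
        return None
    log.info("calling: %s %s", tool_name, arguments)
    return run_tool(tool_name, arguments)


def fake_run(tool_name, arguments):
    # Stands in for the real MCP client call.
    return f"ran {tool_name}"


def always_refuse(tool_name, arguments):
    # Stands in for a confirmation dialog where the user clicks "No".
    return False


print(guarded_call("dns_lookup", {"domain": "captaindns.com"}, fake_run, always_refuse))
print(guarded_call("create_probe", {"domain": "captaindns.com"}, fake_run, always_refuse))
```

The read-only lookup goes through without a prompt, while the refused sensitive call never reaches the server.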

3. Isolate and secure access to systems

MCP is not magic cybersecurity, but it helps to:

- limit what the AI can do: expose a read-only, very limited tool rather than the raw API.

- separate environments: one MCP for prod, another for preprod, another for a given tenant.

- control permissions: for example, a user could let the AI read CaptainDNS queries but not create new probes.
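
One simple way to picture the permission part is a server that only exposes the tools matching what the user granted. The mapping below is hypothetical (the scope names and the `create_probe` tool are assumptions), but it shows the read-versus-create split from the example above.

```python
# Hypothetical mapping: which permission each tool needs.
TOOL_SCOPES = {
    "dns_lookup": "read",
    "request_history": "read",
    "create_probe": "write",
}


def exposed_tools(granted_scopes: set) -> list:
    """List the tools a session may see, given the scopes the user granted."""
    return sorted(
        name for name, scope in TOOL_SCOPES.items() if scope in granted_scopes
    )


def is_allowed(tool_name: str, granted_scopes: set) -> bool:
    """Check one call; unknown tools are always refused."""
    return TOOL_SCOPES.get(tool_name) in granted_scopes


print(exposed_tools({"read"}))
print(is_allowed("create_probe", {"read"}))
```

Filtering the tool list and checking each call are both useful: the first keeps the model from planning around tools it cannot use, the second protects the server if a call is attempted anyway.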

Is MCP "universal"?

MCP aims to be an open standard, not the single protocol for the universe.

Concretely:

- It is meant to be agnostic of the AI provider.

- It should be reusable by several types of apps (chat, IDE, autonomous agents, etc.).

- It does not prevent other approaches from coexisting (proprietary plugins, direct APIs, webhooks, etc.).

You can see it as:

a common base for tools that want to be "AI-ready" without betting everything on one ecosystem.

CaptainDNS will build on that base without giving up the web UI or the existing API. MCP comes on top, for those who want to go further.

Why an MCP for CaptainDNS?

The real question of this post: what does it bring, specifically, to a DNS and email-oriented tool like CaptainDNS?

Here are a few directions that will guide the build.

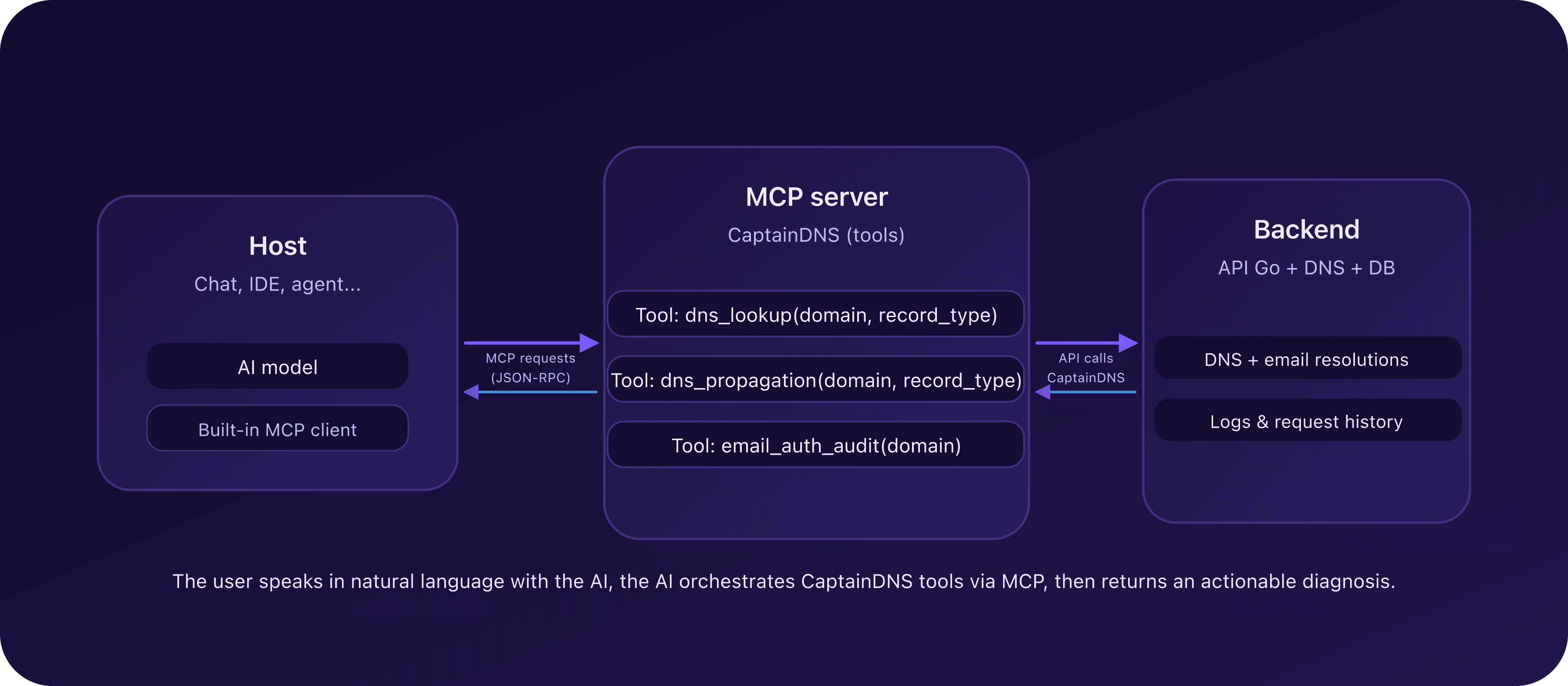

On the CaptainDNS side, the MCP server sits between the AI host and the existing Go API.

1. A DNS/email copilot inside your usual chat

Picture this:

Check the MX propagation for captaindns.com and tell me if there is a risk of lost mail.

The AI agent could:

- call a dns_propagation tool exposed by CaptainDNS via MCP,

- fetch a multi-resolver view,

- answer with:

- lagging resolvers,

- disagreeing IPs,

- a risk summary.
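
The kind of summary described above could be computed along these lines. The sketch assumes the propagation tool returns, for each resolver, the set of answers it served; that response shape, the field names and the sample data are all invented for the example.

```python
def summarize_propagation(expected: set, answers: dict) -> dict:
    """Compare each resolver's answer with the record set that should be live."""
    no_answer = sorted(r for r, got in answers.items() if not got)
    disagreeing = sorted(
        r for r, got in answers.items() if got and got != expected
    )
    in_sync = len(answers) - len(no_answer) - len(disagreeing)
    return {
        "in_sync": in_sync,
        "no_answer": no_answer,
        "disagreeing": disagreeing,
        "at_risk": bool(no_answer or disagreeing),
    }


# Invented sample data, keyed by resolver address.
answers = {
    "1.1.1.1": {"10 mx1.example.net."},
    "8.8.8.8": {"10 mx-old.example.net."},
    "9.9.9.9": set(),
}
summary = summarize_propagation({"10 mx1.example.net."}, answers)
print(summary)
```

The model would then turn a structure like `summary` into a plain-language answer about the risk of lost mail.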

Same story for email:

Check SPF, DKIM and DMARC for captaindns.com and explain what might block at Microsoft.

The AI then calls email_auth_audit and returns an understandable explanation instead of a block of TXT.
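
As a small taste of what "an understandable explanation" involves, the sketch below parses a DMARC TXT record into its tags and turns the policy into a sentence. It covers only the `v` and `p` tags and is not the actual email_auth_audit logic; the sample record and the wording of the notes are made up.

```python
def parse_dmarc(record: str) -> dict:
    """Split a DMARC TXT record into its tag=value pairs."""
    tags = {}
    for part in record.split(";"):
        if "=" in part:
            key, value = part.split("=", 1)
            tags[key.strip().lower()] = value.strip()
    return tags


# Plain-language notes for the three policy values DMARC defines.
POLICY_NOTES = {
    "none": "monitoring only, failing mail is still delivered",
    "quarantine": "failing mail should be treated as suspicious",
    "reject": "failing mail should be rejected",
}


def explain_dmarc(record: str) -> str:
    """Turn a raw DMARC record into one readable sentence."""
    tags = parse_dmarc(record)
    if tags.get("v") != "DMARC1":
        return "No valid DMARC record found."
    policy = tags.get("p", "").lower()
    note = POLICY_NOTES.get(policy, "the policy tag is missing or unknown")
    return f"DMARC policy '{policy}': {note}."


print(explain_dmarc("v=DMARC1; p=none; rua=mailto:dmarc@example.com"))
```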

2. Faster incident troubleshooting

When incidents happen:

- a domain no longer resolves properly,

- a DNS failover does not propagate,

- emails stop landing at some providers.

The agent can:

- review the CaptainDNS request history (via a request_history tool),

- rerun targeted tests (lookup, propagation),

- correlate results,

- propose an initial diagnosis to the human (SRE, admin, deliverability consultant, etc.).

CaptainDNS does not replace expertise, but MCP lets it do a good share of the groundwork before a human steps in.

3. Integrate into existing pipelines and workflows

A CaptainDNS MCP could also be used by:

- CI/CD pipelines,

- internal workflows,

- team assistants (SRE, SecOps, support).

For example:

- after a DNS zone deployment, an AI agent can check:

- that the expected A/AAAA records respond everywhere,

- that DMARC stays consistent,

- and that no MX disappeared.

All of it using CaptainDNS tools instead of scattered homemade scripts.
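
A post-deployment check of that kind can be sketched as a comparison between the records a deployment expects and what a lookup returns. In the example, `fake_lookup` stands in for a call to a CaptainDNS lookup tool, and the zone data is invented (it uses documentation addresses).

```python
def check_zone(expected: dict, lookup) -> list:
    """Return one message per expected record that the lookup does not serve."""
    problems = []
    for (name, record_type), wanted in expected.items():
        found = set(lookup(name, record_type))
        missing = wanted - found
        if missing:
            problems.append(f"{name} {record_type}: missing {sorted(missing)}")
    return problems


# Invented zone data: what the deployment is supposed to publish.
EXPECTED = {
    ("example.com", "A"): {"192.0.2.10"},
    ("example.com", "MX"): {"10 mx1.example.com."},
}


def fake_lookup(name: str, record_type: str) -> list:
    # Stand-in for the real tool call; here the MX record has disappeared.
    live = {("example.com", "A"): ["192.0.2.10"]}
    return live.get((name, record_type), [])


problems = check_zone(EXPECTED, fake_lookup)
print(problems or "zone looks consistent")
```

In a CI/CD pipeline, a non-empty `problems` list would typically fail the job or trigger a notification.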

What is coming in the next articles

This first article set the stage. The next ones will get more technical and concrete.

On the menu:

- CaptainDNS MCP architecture: how the CaptainDNS "MCP server" will be structured, how it will talk to the existing API, which transport and auth choices will be made.

- Tool design: which initial tools will be exposed (dns_lookup, dns_propagation, email_auth_audit, request_history, etc.), with their parameters and responses.

- Guardrails and security: how to limit what the AI can do, handle API keys, trace requests, isolate environments.

- Concrete demos: end-to-end scenarios ("email incident", "DNS migration", "new domain setup") driven in natural language but executed via CaptainDNS.

CaptainDNS will document architecture choices, tradeoffs and iterations as the build progresses.

And once a stable core is ready, there will be an early preview for users who want to try the MCP in their own environments.

MCP glossary

MCP (Model Context Protocol)

Protocol that defines how an AI model can interact with external tools (APIs, databases, services) via "MCP servers".

It standardizes how tools are described, parameters, responses, and the dialogue with the host.

Host

Application that contains the AI model and serves as the user interface:

- chat application,

- IDE,

- autonomous agent,

- internal company tool, etc.

The host decides when and how MCP tools are called.

MCP server

Service that exposes tools the host can use via MCP.

In the CaptainDNS context, the MCP server will expose DNS/email tools (dns_lookup, dns_propagation, etc.) in MCP format.

Tool

Specific action exposed by the MCP server, with:

- a name (dns_lookup),

- structured parameters (domain, record_type, etc.),

- a response schema.

The AI model can call it when needed to answer the user.
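
Because parameters are structured, a server can reject a malformed call before doing any work. The sketch below is a deliberately partial check (required names, unknown names and string types only), not a full JSON Schema validator, and the schema itself is an assumption based on the parameter names above.

```python
# Assumed parameter schema for dns_lookup, in JSON Schema style.
DNS_LOOKUP_SCHEMA = {
    "type": "object",
    "properties": {
        "domain": {"type": "string"},
        "record_type": {"type": "string"},
    },
    "required": ["domain", "record_type"],
}


def validate_arguments(schema: dict, arguments: dict) -> list:
    """Return a list of problems; an empty list means the call looks valid."""
    errors = []
    properties = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in arguments:
            errors.append(f"missing parameter: {name}")
    for name, value in arguments.items():
        if name not in properties:
            errors.append(f"unknown parameter: {name}")
        elif properties[name].get("type") == "string" and not isinstance(value, str):
            errors.append(f"wrong type for {name}")
    return errors


print(validate_arguments(DNS_LOOKUP_SCHEMA, {"domain": "captaindns.com"}))
```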

LLM (Large Language Model)

Large language model able to:

- understand natural language instructions,

- generate text,

- plan actions (like calling MCP tools),

- explain its results.

The LLM itself does not directly "see" the database or DNS: it goes through tools.

JSON-RPC

Lightweight JSON-based remote procedure call protocol: each request names a method and its parameters, and each response carries either a result or an error.

MCP messages follow JSON-RPC 2.0, so hosts and MCP servers can talk predictably.
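
On the receiving end, a client has to tell a result from an error. Here is a minimal sketch of that step, assuming the raw response arrives as a JSON string; the `ToolCallError` class and the sample payload are invented for the example.

```python
import json


class ToolCallError(Exception):
    """Raised when the server answers with a JSON-RPC error object."""


def unwrap_response(raw: str, expected_id) -> dict:
    """Return the result of a JSON-RPC 2.0 response, or raise on error."""
    message = json.loads(raw)
    if message.get("jsonrpc") != "2.0" or message.get("id") != expected_id:
        raise ValueError("not the response we were waiting for")
    if "error" in message:
        error = message["error"]
        raise ToolCallError(f"{error['code']}: {error['message']}")
    return message["result"]


ok = '{"jsonrpc": "2.0", "id": 1, "result": {"content": [{"type": "text", "text": "done"}]}}'
print(unwrap_response(ok, 1))
```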

Frequently asked questions about MCP

Do I need an MCP to use an AI?

No. You can use an AI without MCP, only through a classic chat interface.

MCP becomes interesting when you want to connect the same AI to several tools, or let agents chain actions reliably.

Do I need an MCP to use CaptainDNS?

Also no. CaptainDNS stays fully usable via its web interface and API.

MCP is a complement for users who want to plug CaptainDNS into AI assistants or automated workflows.

Do I have to be a developer to benefit from an MCP?

Not necessarily. The initial setup (plugging MCP into a host, configuring auth) is easier if a developer or DevOps engineer handles it.

But once in place, a well-designed MCP lets less technical profiles drive complex actions in natural language.

Is MCP tied to a specific AI provider?

The goal of MCP is precisely to stay agnostic regarding the AI provider.

One MCP server can, in theory, be used by several hosts and several models without rewriting the integration each time.

Does MCP replace plugins or classic APIs?

No. APIs remain the foundation. MCP often leverages existing APIs and serves as an orchestration layer tailored to AI models.

Think of it as a cleaner, more portable way to expose features to an AI ecosystem.

Related MCP and CaptainDNS guides

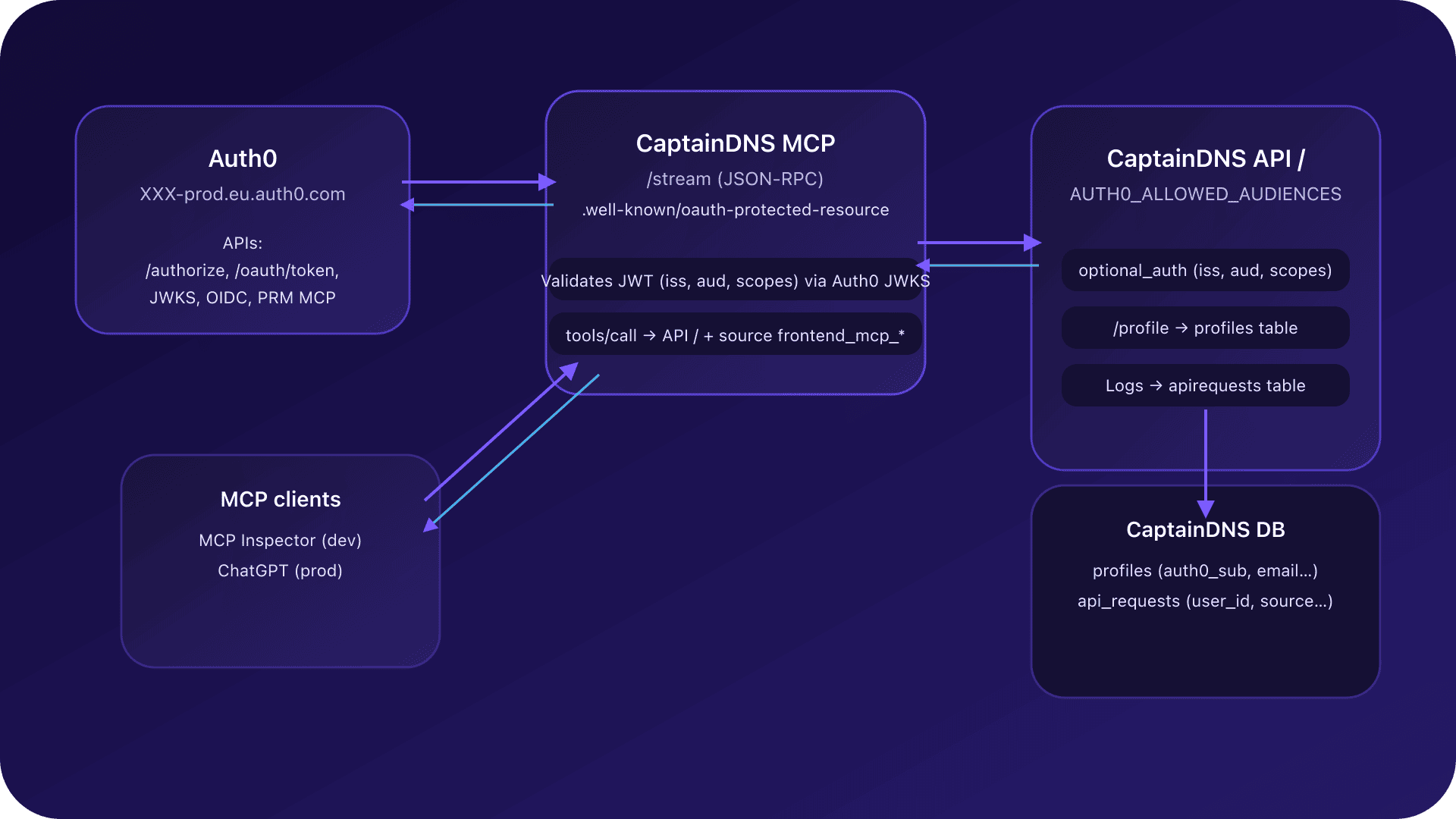

- CaptainDNS MCP Architecture - Dive into the technical details of the MCP server.

- Auth0 + MCP Integration - OAuth2 authentication for the CaptainDNS MCP.

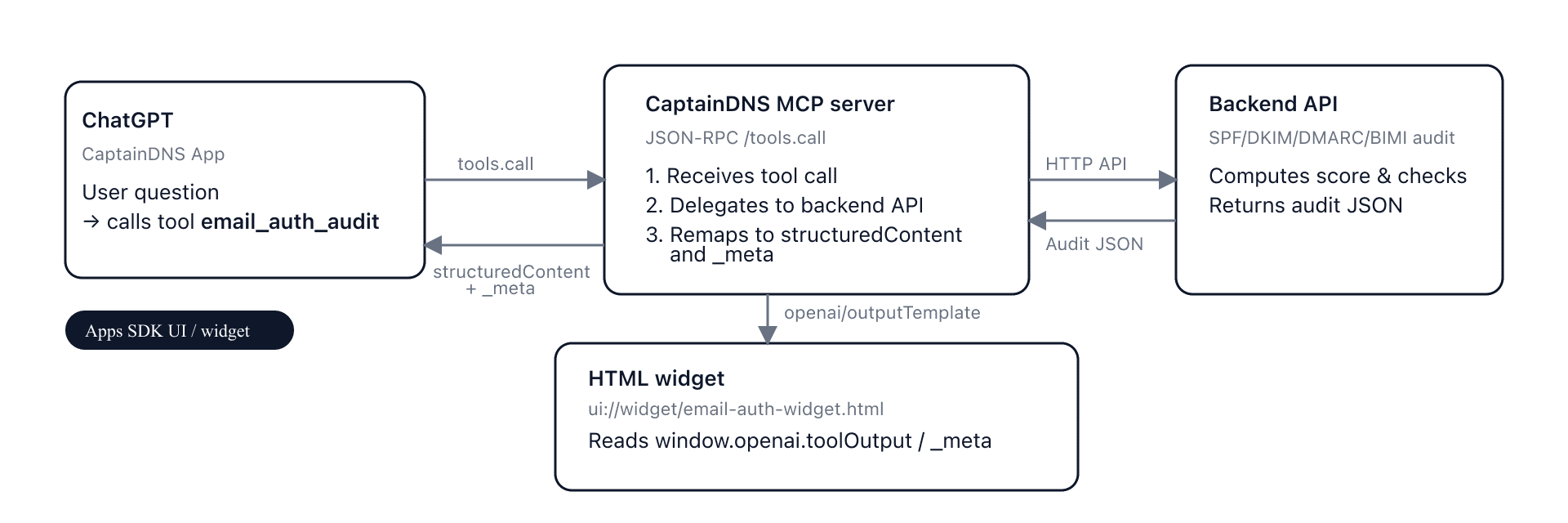

- MCP Widgets for ChatGPT - How ChatGPT widgets leverage the CaptainDNS MCP.

- Email Audit Widget in ChatGPT - Implementation of the email_auth_audit widget.

- Resilient DNS Monitoring - Building multi-layer DNS surveillance.

- History and DNS Monitoring - Diagnose, archive, share, then monitor.